Live Coding Control of a Modular Synthesizer with ChucK

by Daniel McKemie

Leveraging computing power to generate and process control signals for the modular synthesizer is a practice with a storied, yet largely forgotten, past. From the first musical realizations of the 1950s until the 1980s, computers could take days to generate only a few minutes of music. Beginning in the late 1960s, ‘hybrid systems’ like Max Mathews’ GROOVE, and later the Roland MC-8 MicroComposer, used analog synthesizers to generate sound while relying on computers to calculate the musical events. With the introduction of digital FM synthesis in the Yamaha DX7 in 1983, these systems all but went away. Today, with the affordability and rising popularity of analog modular synthesizers, and the engaging style of computer music performance enabled by live coding, linking these two worlds makes for very compelling live music. This article explores the benefits of using ChucK as a live coding tool for generating and processing control signals, altering and distributing audio effects to different parts of the synthesizer on the fly, and using the computer to map and monitor signal inputs and, in turn, conditionally create ever-changing interactive performance environments.

INTRO

The purpose of this article is to encourage more musicians to explore a hybrid system of live coding/computer software and modular synthesizer, and to expand the capabilities of each part by using the other. It is the opinion of the author, based on personal experience, that standing against certain musical and/or computational tools for extramusical reasons (i.e., a purist approach) is unnecessarily prohibitive. The author also feels that artists should, first and foremost, work with the most effective means that are not just available to them but that they feel enthusiastic about, while at the same time welcoming changes to their philosophies and outlooks as they arise.

HISTORY

Early computer music works took hours, or sometimes days, to realize a small amount of music, and it was not until the 1980s that personal computers became affordable and powerful enough to generate complex synthesis. Hybrid systems, in which the computer handled the compositional and algorithmic functions and the analog synthesizer produced the resulting audio, represented a significant leap in compositional productivity. This began with Max Mathews’ GROOVE system in 1969, which monitored performer actions on a voltage-controlled synthesizer; those actions could then be edited and recalled on a computer and saved for future use. The Roland MC-8 was another important piece in that it was a commercially available computer attached to a CV/Gate output interface. The idea was that notes and events could be programmed in the computer and assigned an output through the interface to the modular synthesizer. This device is similar in concept to the setup this article explores.

HARDWARE CLARIFICATIONS

For the sake of clarity, this article distinguishes the two kinds of signal as follows: audio signals deal with sound, and control signals deal with structure. This closely aligns with Don Buchla’s philosophy, in that his instruments mechanically separated the two; most other modular synthesizers, however, allow audio and control signals to be used interchangeably. Control signals tend to be low-frequency signals, triggers, and gates, which are used to modulate other signals and actuate events. Audio signals can be heard, and are generated by oscillators and shaped by VCAs, envelopes, and filters. There is much crossover, though, and these distinctions can get very blurry, so the reader should not worry too much about the differences.

To achieve maximum versatility in low-level signal processing and generation, there are a few components one needs. First, the user needs an audio interface with DC-coupled outputs. This allows steady and/or slow-moving voltages to be sent to the synthesizer. A list of DC-coupled interfaces can be found HERE. To prevent damage to the interface itself, one should get floating ring cables with a TRS connection at the interface and TS at the synthesizer. Otherwise one pole of the signal has nowhere to go, causing the ring to short to the ground of the output. While you may achieve the desired effect without these cables, using incorrect cables can damage your interface. One could also use a TRS to dual TS cable, with the positive polarity on one connection and the negative on the other. There are plenty of options here, and they are largely dependent on your synthesizer and hardware.

Alternatively, one can achieve the same capabilities with some simple modifications to the audio cables or by building a breakout box. More information on this can be found HERE. For this use case, I will be using a MOTU Ultralite Mk-4 interface with floating ring cables purchased from Expert Sleepers. If one cannot generate control signals in software to send to a modular synthesizer, audio signals are capable of equally engaging results. As stated earlier, CV and audio signals can be used interchangeably!

LIVE CODING

There are plenty of options available for live coding, as well as more traditional programming languages, and each has its own benefits and drawbacks. Having explored Sonic Pi, Gibber, TidalCycles, SuperCollider, FoxDot, and ChucK, I found that ChucK, using the miniAudicle IDE, is my preferred platform for interacting with the modular synthesizer. It should be reiterated that personal preference is the primary driver in language choice here. One is capable of producing all of these results in other languages, though multichannel output is most easily executed in ChucK, as no additional adjustments are needed (say, in the SuperCollider startup file) other than declaring the specs in the preferences.

It is important to keep in mind that analog circuitry is not exact, and this applies to the interface as well as the synthesizer. Interfaces differ in how voltages are generated and received, and these behaviors can change over time. There are no fixed standards when it comes to voltage behaviors in synthesizers, though sticking to one manufacturer can usually help ease complexities and differences; even this is not a guarantee. Code that works with one synthesizer may not (and likely will not) work the same way with a different synthesizer. It is the role of the performer to understand their setup and know what needs to be done in order to achieve their desired results.

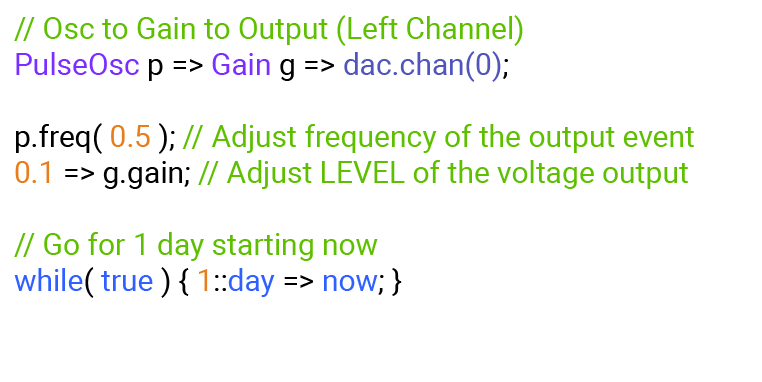

To demonstrate a simple use of sending a control voltage out, the code below represents a waveform with gain control. These two things are fundamental when creating control voltage signals to send to the synthesizer. The gain amount controls the level (or range) of the modulating signal being sent. In the first example, we have a pulse wave going from minimum to maximum every 500ms and being sent at a gain level of 0.1 (on a scale of 0 to 1).
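The original listing survives only as an image, so the following is a minimal sketch of such a patch rather than the author's exact code. It assumes that channel 2 of the interface is a DC-coupled output and that ChucK has been configured with enough output channels; the channel number and variable names are illustrative.

```chuck
// Minimal sketch: a slow pulse wave used as a control voltage.
// Assumption: dac channel 2 is a DC-coupled output patched to the synthesizer,
// and ChucK is set up with at least three output channels.
PulseOsc cv => Gain level => dac.chan(2);

1.0 => cv.freq;     // one full cycle per second: 500 ms low, 500 ms high
0.5 => cv.width;    // 50% duty cycle
0.1 => level.gain;  // scales the swing of the signal sent to the synthesizer

// keep the shred alive so the signal keeps running
while (true)
{
    1::second => now;
}
```

Raising or lowering `level.gain` widens or narrows the voltage swing. The exact voltages produced will depend on the interface, so the value is best tuned by ear.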

OctavePulse

This output is plugged into the 1V/oct input of the VCO of the modular synthesizer. With the pulse width at a 50% duty cycle, we get a change in pitch twice per second: once at minimum, once at maximum. For the sake of illustration, suppose that a gain of 0 yields a 2-volt output and a gain of 0.1 yields a 3-volt output. If the VCO frequency at minimum output is 440 Hz (A4) and at maximum output is 880 Hz (A5), an octave shift happens twice per second. If the frequency knob on the VCO is adjusted to bring the pitch down one octave, the VCO now alternates between 220 Hz at minimum output and 440 Hz at maximum output.
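The arithmetic can be written out explicitly. Assuming an ideal 1V/oct response (real VCOs track only approximately), with $f_0$ the frequency at a reference voltage $V_0$:

$$
f(V) = f_0 \cdot 2^{(V - V_0)/1\,\mathrm{V}}
$$

Using the illustrative voltages above, $V_0 = 2\,\mathrm{V}$ and $f_0 = 440\,\mathrm{Hz}$ give $f(3\,\mathrm{V}) = 440 \cdot 2^{1} = 880\,\mathrm{Hz}$. Turning the frequency knob down one octave sets $f_0 = 220\,\mathrm{Hz}$, so the same one-volt swing now moves between $220\,\mathrm{Hz}$ and $440\,\mathrm{Hz}$.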

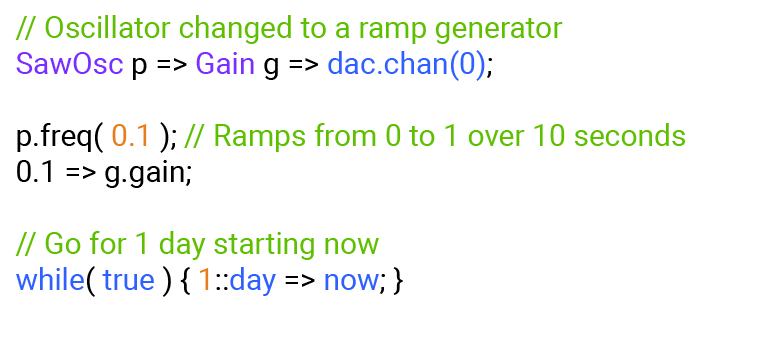

To broaden the example further, the code is changed: the pulse wave becomes a sawtooth wave, and its frequency is set to 0.1 Hz (a change that can be made on the fly; see the accompanying listing).

OctRampDown

OctRampUp

The change in waveform introduces gradual modifications to the analog signal over time. With the same patching as before (this signal plugged into the 1V/oct input of the VCO, with the base frequency at 440 Hz), over the course of 10 seconds the pitch starts at 440 Hz, glides up to 880 Hz, and then suddenly drops back to 440 Hz. The same adjustments to gain still apply here, but now the movements alternate between gradual and sudden. If the frequency of the signal in ChucK were changed from 0.1 to -0.1, the reverse would occur: the pitch would start at 880 Hz, glide down to 440 Hz, and then suddenly return to 880 Hz. This signal could be routed into any number of patch points on a modular synthesizer: the linear or exponential frequency inputs of the VCO, the cutoff frequency of a filter, the amplitude level of a VCA, a mixer to mingle with other signals, and so on. An incredible luxury afforded to the performer by this method of control is that connections can be reassigned without physically repatching the synthesizer. It is akin to having a digital pin matrix in which the performer can mix and move signals, with no dropouts, since shreds in ChucK can be swapped out and replaced on the fly.
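A minimal sketch of the sawtooth version follows. It is an illustration under the same assumptions as before (a DC-coupled output on channel 2, illustrative names), not the author's original listing.

```chuck
// Minimal sketch: a slow sawtooth ramp used as a control voltage.
// Assumption: dac channel 2 is a DC-coupled output patched to the VCO's 1V/oct input.
SawOsc ramp => Gain level => dac.chan(2);

0.1 => ramp.freq;   // one ramp every 10 seconds; per the text, -0.1 reverses the direction
0.1 => level.gain;  // scales the depth of the sweep

// keep the shred alive so the ramp keeps running
while (true)
{
    1::second => now;
}
```

To reroute the ramp, change the channel number in `dac.chan(...)` and replace the running shred. The physical patch stays as it is, provided the new output is already cabled to the desired patch point.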

AUDIO SIGNAL CONTROL

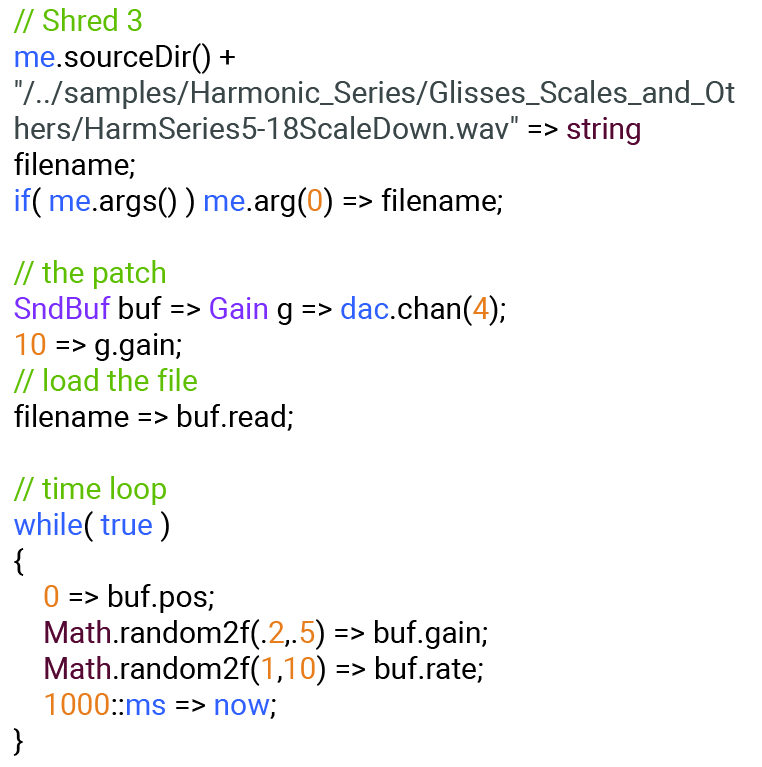

One technique to consider is using recorded samples as a control source. It is the opinion of the author that blending purely electronic and recorded sounds leads to the most interesting musical results. There is no shortage of sampling and audio manipulation techniques using the computer, most of which fall well beyond the scope of this writing. To demonstrate, using a recorded sample from Lou Harrison’s instrument collection, the code plays back the clip and allows the user to control the playback speed and the gain of each iteration. The result is a blend of a sonically interesting acoustic sound source, changing over time and possessing a wide range of harmonic content, with the analog circuitry of the synthesizer. This can be taken further by utilizing granular synthesis techniques, which are also saved for a future discussion.
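As a rough illustration of this idea (not the author's listing, which survives only as images), the sketch below loops a sample through a chosen output with adjustable rate and gain. The file name `sample.wav`, the output channel, and the function name `playOnce` are all hypothetical.

```chuck
// Minimal sketch: looping a recorded sample as a signal source for the synthesizer.
// Assumptions: "sample.wav" sits next to this file (hypothetical name),
// and dac channel 2 is patched to an input on the synthesizer.
SndBuf clip => Gain level => dac.chan(2);
me.dir() + "sample.wav" => clip.read;

// stop early if the file did not load, so the loop below cannot spin without advancing time
if (clip.samples() == 0)
{
    <<< "could not load sample.wav" >>>;
    me.exit();
}

// play the clip once at the given (positive) rate and gain
fun void playOnce(float rate, float gain)
{
    gain => level.gain;
    rate => clip.rate;
    0 => clip.pos;
    // a faster rate shortens the playback time proportionally
    (clip.length() / rate) => now;
}

// edit these two values and replace the shred to change each iteration
1.0 => float rate;
0.2 => float gain;

while (true)
{
    playOnce(rate, gain);
}
```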

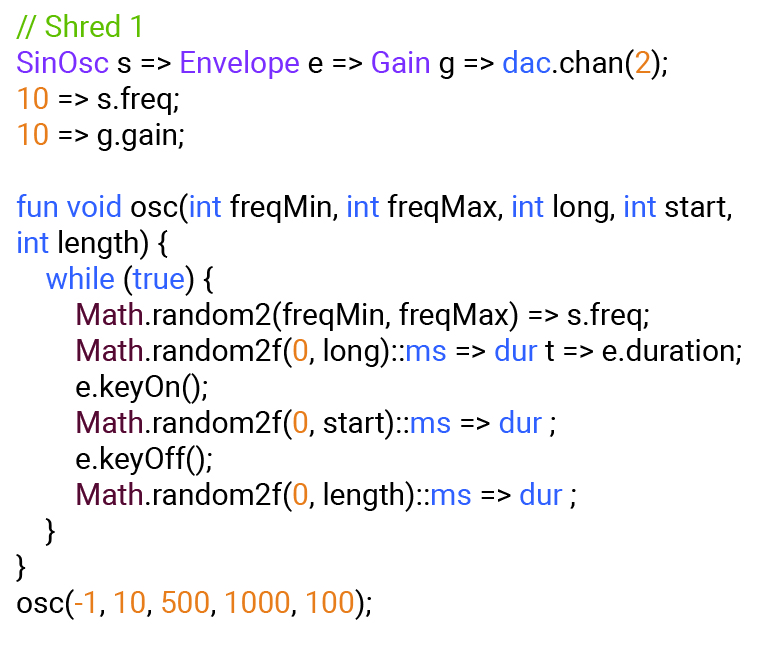

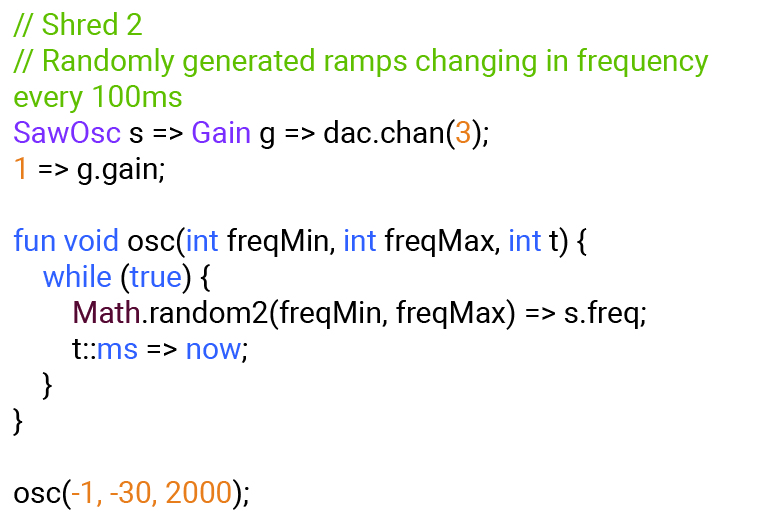

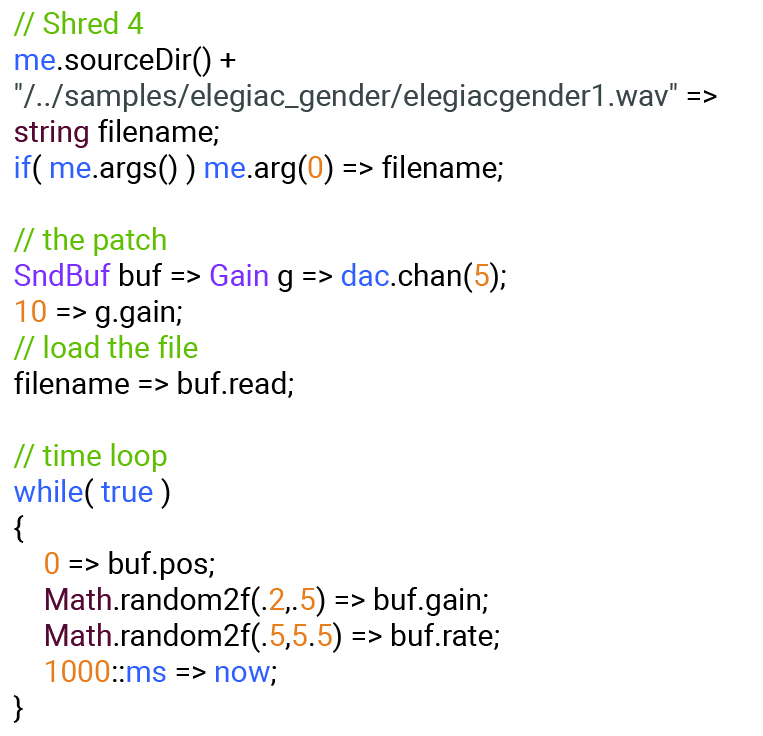

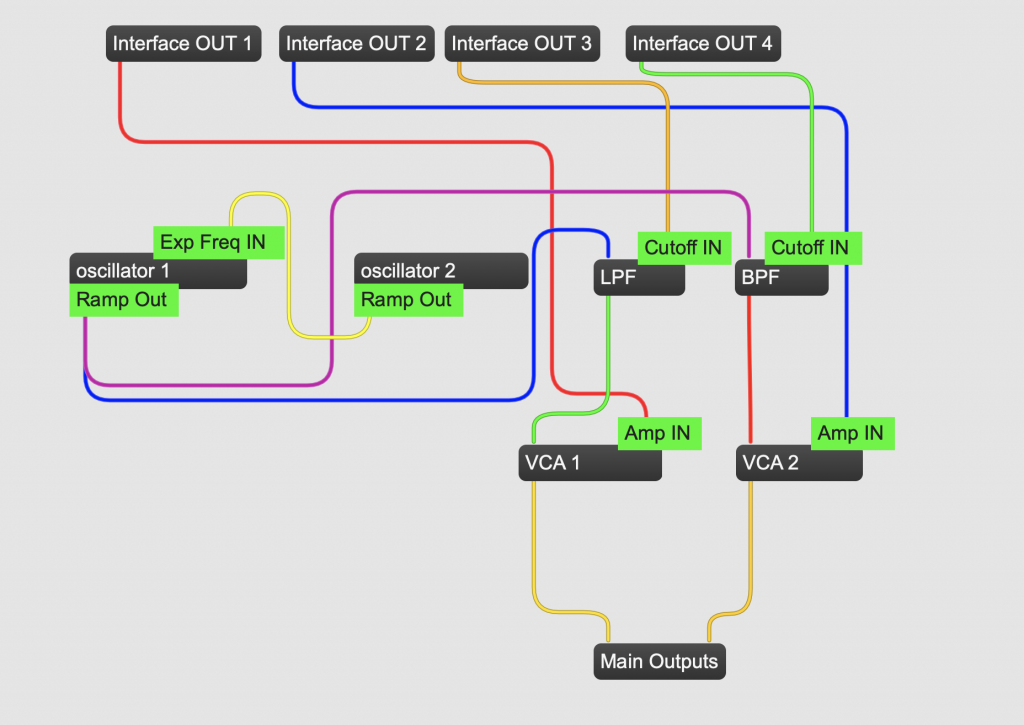

The code blocks below represent a simple piece that links a few shreds in ChucK to a Kilpatrick Phenol desktop modular synthesizer. The synthesizer is equipped with two VCOs, two VCFs (LPF & BPF), two VCAs, and various other utilities. It should be noted that on this particular instrument, all voltages (except for gates) are BIPOLAR. This is not the case in many modular synthesizer designs, which is why negative values are used in this code in order to utilize the full range of the circuit. The user’s synthesizer may be different and, as always, experimentation is highly encouraged! I will use the four shreds of code outlined below to perform this etude.

CONCLUSIONS/FURTHER WORK

A next step on this path could be to flip the relationship and send signals out of the synthesizer and into the computer. Treating and processing CV signals from hardware opens up powerful utility tools that can be programmed in the computer to allow for even more customizable modularity in performance systems. Mapping the modular synthesizer this way opens up the construction of interactive performance environments that can read subsonic structural control voltages, and in turn make decisions based on these forms. And of course, audio processing and effects can be added to enhance the depth of the sound quality of analog circuitry. Simply put, all the techniques outlined in this article could be experimented with in reverse.
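As a starting point for this reversed relationship, the sketch below polls an incoming control voltage and reacts when it crosses a threshold. It assumes an interface whose input on channel 0 is DC-coupled; the channel, threshold value, and polling interval are illustrative choices.

```chuck
// Minimal sketch: reading a control voltage from the synthesizer.
// Assumption: adc channel 0 is a DC-coupled input receiving a CV signal.
adc.chan(0) => Gain cvIn => blackhole;   // blackhole pulls samples without sounding them

0.5 => float threshold;   // hypothetical level, in ChucK's normalized sample units
0 => int above;           // tracks whether the CV is currently over the threshold

while (true)
{
    cvIn.last() => float v;   // most recent input sample

    if (v > threshold && !above)
    {
        1 => above;
        <<< "CV rose above threshold:", v >>>;
        // a real patch might spork a new shred or change a parameter here
    }
    else if (v <= threshold && above)
    {
        0 => above;
    }

    10::ms => now;   // polling interval
}
```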

Using TouchOSC enables the creation of custom interfaces to interact with the computer, synthesizer, or both. Moving beyond the standard knobs and sliders afforded by modular synthesizers, TouchOSC allows for XY and multitouch grid controls, grids that change the routing of signals, and triggers for presets programmed in the computer, just to name a few. Because OSC can send and receive data as floating-point numbers, it pairs very well with CV generation and processing.
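One way this pairing might look in ChucK is sketched below: a fader value received over OSC is written directly to a steady output voltage. The port number and the address `/1/fader1` are assumptions and must match whatever the TouchOSC layout actually sends. The output channel is again assumed to be DC-coupled.

```chuck
// Minimal sketch: mapping an OSC fader to a steady control voltage.
// Assumptions: TouchOSC sends a single float to "/1/fader1" on port 8000
// (both hypothetical), and dac channel 2 is a DC-coupled output.
OscIn oin;
OscMsg msg;
8000 => oin.port;
oin.addAddress("/1/fader1, f");

Step cv => dac.chan(2);   // Step holds a constant value until it is changed
0.0 => cv.next;

while (true)
{
    oin => now;                       // wait for incoming OSC
    while (oin.recv(msg))
    {
        msg.getFloat(0) => cv.next;   // fader value becomes the output level
    }
}
```

For a bipolar instrument, the incoming 0 to 1 range could be rescaled (for example to -1 to 1) before being assigned to `cv.next`.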

It is the author’s hope that this article has opened up the possibilities to curious readers on how to incorporate modular synthesizers into their live coding sets and vice versa. Expanding both of these tool sets can only serve to enhance the musical experience.

ABOUT THE AUTHOR

Daniel McKemie (né Steffey) is an electronic musician, percussionist, and composer based in New York City. Currently, he is focusing on technology that utilizes the internet and browser technology to realize a more accessible platform for multimedia art. He is also researching the interfacing of custom software with hardware systems (such as modular synthesizers, mobile phones, and handmade circuitry) to create performance environments that influence each other.

His music focuses on the boundaries of musical systems, both electronic and acoustic, that are on the verge of collapse. The power in the brittleness of these boundaries often dictates more than the composer or performers can control, which is very welcome.

Daniel has provided music for and worked with a number of different artists and companies in various capacities including: Roscoe Mitchell, New York Deaf Theatre, The William Winant Percussion Group, Funsch Dance Experience, Ryan Ross Smith, Iceland Symphony Orchestra, The Montreal-Toronto Art Orchestra, Nick Wang, Christian Wolff, Bob Ostertag, and Steve Schick, among others.

More info at: https://www.danielmckemie.com